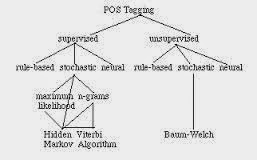

Supervised and unsupervised training with Hidden Markov Models

The probabilistic models used by Church and DeRose in the papers just cited were Hidden Markov Models (HMMs), imported from the speech recognition community. An HMM describes a probabilistic process, or automaton, that generates a sequence of states and a parallel sequence of output symbols. Commonly, the sequence of output symbols represents a sentence of English or of some other natural language. An HMM, or any model, that defines probabilities of word sequences (that is, sentences) of a natural language is known as a language model.

The probabilistic automaton defined by an HMM can be in any one of a finite number of distinct states. The automaton begins by choosing a state at random. Then it chooses a symbol to emit, the choice being sensitive to the state. Next it chooses a new state, emits a symbol from that state, and the process repeats. Each choice is stochastic, that is, probabilistic. At each step, the automaton makes its choice at ra...
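The generative process just described can be sketched in a few lines of Python. This is a minimal illustration, not Church's or DeRose's model: the tag-like states (`DET`, `NOUN`, `VERB`), the words, and every probability below are hypothetical values invented for the example, and the helper names are likewise made up. `generate` follows the automaton step by step (pick a start state, emit a word, move to a new state, repeat); `joint_probability` multiplies the start, transition, and emission probabilities of one state sequence together with its words; and `sentence_probability` sums that joint probability over every possible hidden state sequence, which is how an HMM assigns a probability to a word sequence alone and so serves as a language model.

```python
import itertools
import random

# Hypothetical toy HMM: the states, words, and probabilities are invented for illustration.
INITIAL = {"DET": 0.6, "NOUN": 0.3, "VERB": 0.1}
TRANSITION = {
    "DET": {"DET": 0.0, "NOUN": 0.9, "VERB": 0.1},
    "NOUN": {"DET": 0.1, "NOUN": 0.2, "VERB": 0.7},
    "VERB": {"DET": 0.6, "NOUN": 0.3, "VERB": 0.1},
}
EMISSION = {
    "DET": {"the": 0.7, "a": 0.3},
    "NOUN": {"dog": 0.5, "cat": 0.5},
    "VERB": {"barks": 0.4, "sees": 0.6},
}


def choose(dist, rng):
    """Draw one outcome from a {outcome: probability} dictionary."""
    outcomes = list(dist)
    return rng.choices(outcomes, weights=[dist[o] for o in outcomes], k=1)[0]


def generate(length, rng):
    """Choose a start state, emit a symbol, move to a new state, and repeat."""
    states, symbols = [], []
    state = choose(INITIAL, rng)  # the first state is chosen at random
    for step in range(length):
        if step > 0:
            # The next state is drawn from a distribution that depends on the current state.
            state = choose(TRANSITION[state], rng)
        states.append(state)
        # The emitted word is drawn from a distribution that depends on the state.
        symbols.append(choose(EMISSION[state], rng))
    return states, symbols


def joint_probability(states, symbols):
    """P(states, symbols): product of the start, transition, and emission probabilities."""
    p = INITIAL[states[0]] * EMISSION[states[0]].get(symbols[0], 0.0)
    for prev, cur, sym in zip(states, states[1:], symbols[1:]):
        p *= TRANSITION[prev][cur] * EMISSION[cur].get(sym, 0.0)
    return p


def sentence_probability(symbols):
    """Language-model probability of a word sequence: sum over every hidden state sequence."""
    return sum(
        joint_probability(states, symbols)
        for states in itertools.product(INITIAL, repeat=len(symbols))
    )


rng = random.Random(0)
states, words = generate(4, rng)
print(list(zip(states, words)))
# 0.6*0.7 * 0.9*0.5 * 0.7*0.4 for the path DET -> NOUN -> VERB
print(joint_probability(["DET", "NOUN", "VERB"], ["the", "dog", "barks"]))
print(sentence_probability(["the", "dog", "barks"]))
```

The brute-force sum in `sentence_probability` enumerates every state sequence, so its cost grows exponentially with sentence length; it is meant only to make the definition concrete on tiny inputs. Because each word in this toy model can be emitted by only one state, "the dog barks" has exactly one contributing state sequence, so its sentence probability equals that single joint probability.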